May 24, 2023

Whole Brain Emulation

Posted by Logan Thrasher Collins in categories: existential risks, mapping, neuroscience, robotics/AI

I had an amazing experience at the Foresight Institute’s Whole-Brain Emulation (WBE) Workshop at a venue near Oxford! See https://foresight.org/whole-brain-emulation-workshop-2023/ for more information and a list of participants. I had the opportunity to work alongside some of the most brilliant, ambitious, and visionary people I’ve ever encountered on the quest to recreate the human brain in a computer. We also discussed in depth the existential risks of upcoming artificial superintelligence and how to mitigate these risks, perhaps with the aid of WBE.

My subgroup focused on exploring the challenge of human connectomics (mapping all of the neurons and synapses in the brain).

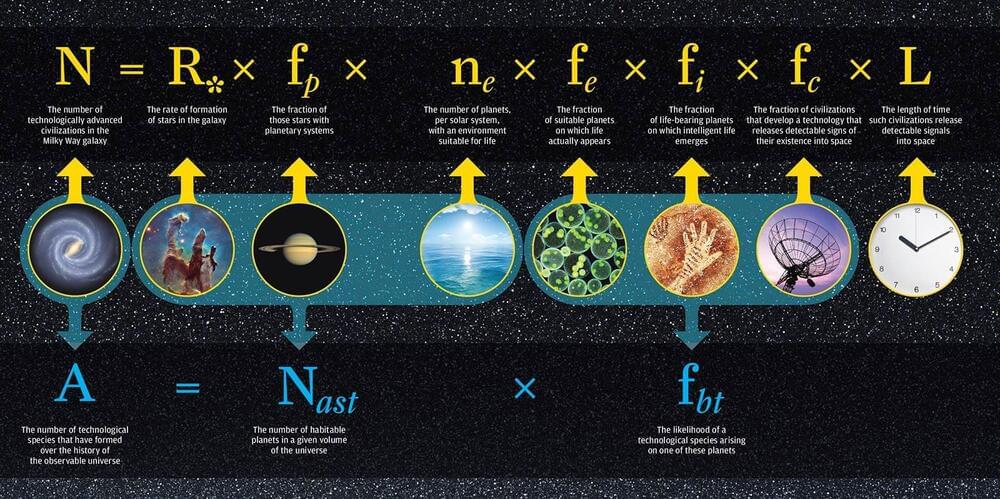

WBE is a potential technology for generating software intelligence that is human-aligned simply by being based directly on human brains. Past discussions have generally assumed a fairly long timeline to WBE, while past AGI timelines had broad uncertainty. There were also concerns that the neuroscience of WBE might boost AGI capability development without helping safety, although no consensus developed. Recently, many people have updated their AGI timelines towards earlier development, raising safety concerns. That has led some people to consider whether WBE development could be significantly sped up. Doing so would reorder the arrival of these technologies, a form of differential technology development, so that aligned software intelligence is already present, which might lessen the risk of unaligned AGI.